- A study by AI experts Simon Lermen and Daniel Paleka shows that AI can cross-reference trivial details to unmask anonymous social media users.

- The skill barrier for such de-anonymization is now low, requiring only an internet connection and public AI models.

- Conversely, AI systems like AIMS can fabricate thousands of convincing fake profiles with years of activity for espionage.

- This duality forces a re-evaluation of digital trust, blurring lines between real and synthetic identity.

- Mitigation requires platforms to limit data access and users to be more cautious with shared personal information.

AI transforms the threat landscape

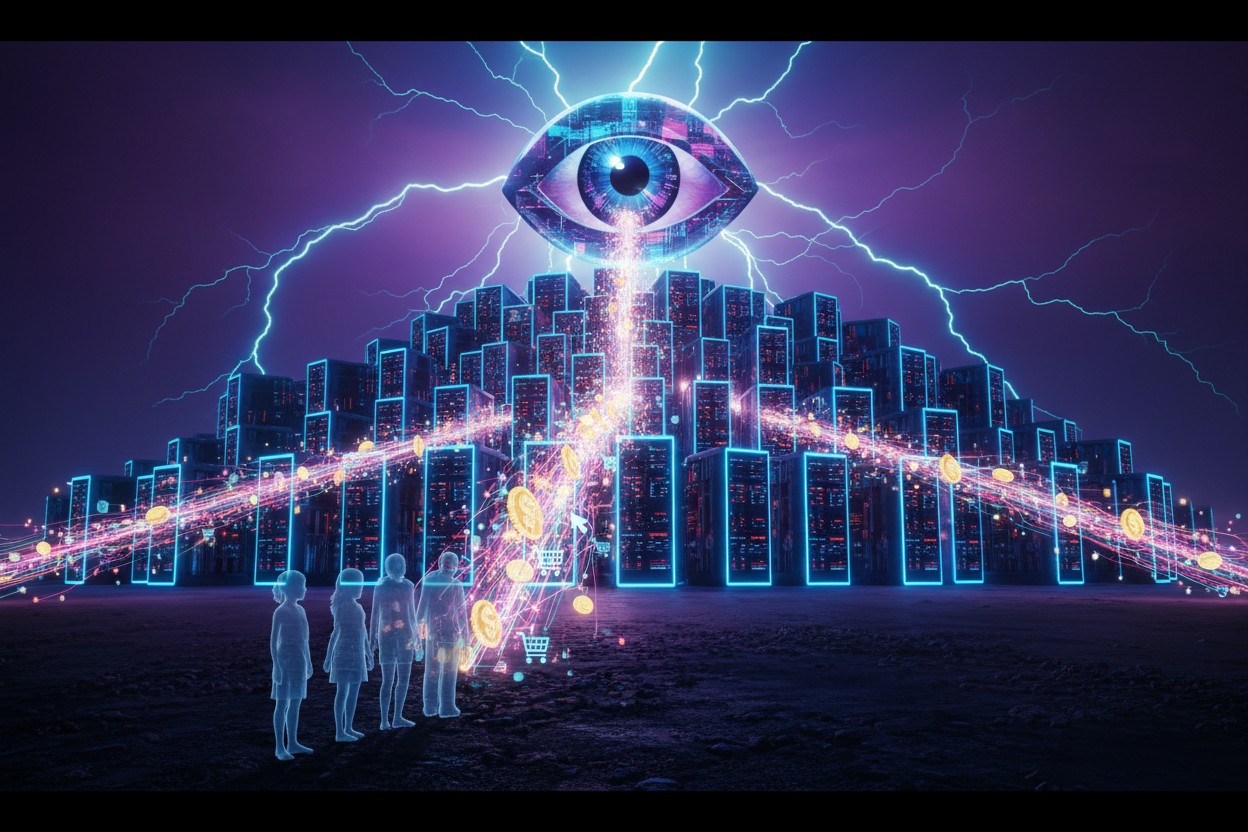

Systems like AIMS showcase the opposite end of the spectrum: AI capable of generating and managing 30,000 legitimate-looking fake online profiles. This technology can fabricate years of social media activity, complete with comments, likes and inter-profile interactions, creating impeccable, entirely fictional digital backgrounds.

As noted by BrightU.AI's Enoch, systems like AIMS represent sophisticated AI-driven influence platforms designed to automate mass deception online. They generate vast networks of fake personas to artificially amplify narratives, manipulate public discourse and fabricate social proof. In espionage, such tools are weaponized to create flawless, long-term digital legends for operatives, complete with simulated histories and interactions. This fundamentally transforms the threat landscape by enabling scalable, persistent and highly credible disinformation campaigns.

Where one AI can erase a person's anonymity, another can build a convincing alias from nothing. A spy could use AIMS-like software to create an extensive, credible history of social media activity, complete with reviews and seemingly genuine interactions from "friends."

This duality forces a re-evaluation of digital trust. The very tools that can expose a real person hiding behind a pseudonym can also arm a malicious actor with an army of believable pseudonyms.

Faced with these dual threats, mitigation strategies are urgently needed. Lermen suggests that social media platforms take proactive measures by limiting data access. This could involve implementing rate limits on user data downloads, detecting automated scraping bots and restricting bulk data exports. He also stresses the need for individual users to be more cautious about the personal information they share online.

The concurrent rise of AI as both a master unmasker and a master forger signals a pivotal moment. We are entering an era where the line between real and synthetic identity is blurred, demanding new frameworks for digital verification, privacy and security. The question is no longer just how to protect our online selves, but how to verify that anyone we interact with online has a self to begin with.

Sources include: Technocracy.news, NewsBytesApp.com, Brighteon.com, BrightU.ai
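
To make the platform-side mitigation more concrete: the rate limits on user data downloads that Lermen suggests could, in principle, look something like the token-bucket sketch below. This is only an illustration, not a description of how any real platform works. The class names `TokenBucket` and `ProfileRateLimiter`, the `client_id` key and the capacity and refill values are all hypothetical.

```python
class TokenBucket:
    """Token bucket: each request spends one token; tokens refill over time."""

    def __init__(self, capacity: float, refill_per_second: float, start: float = 0.0) -> None:
        self.capacity = capacity                    # largest burst allowed (assumed value)
        self.refill_per_second = refill_per_second  # sustained request rate allowed
        self.tokens = capacity                      # start with a full bucket
        self.last_refill = start

    def allow(self, now: float) -> bool:
        # Add tokens for the time elapsed since the last call, capped at capacity.
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_refill = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class ProfileRateLimiter:
    """Keeps one bucket per client so a single scraper cannot bulk-download profiles."""

    def __init__(self, capacity: float = 5.0, refill_per_second: float = 1.0) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._buckets: dict[str, TokenBucket] = {}

    def allow(self, client_id: str, now: float) -> bool:
        bucket = self._buckets.get(client_id)
        if bucket is None:
            # First request from this client: create a full bucket starting now.
            bucket = TokenBucket(self.capacity, self.refill_per_second, start=now)
            self._buckets[client_id] = bucket
        return bucket.allow(now)
```

In a live service, `now` would typically come from a monotonic clock such as `time.monotonic()`, and a denied request would be answered with an error rather than data. Passing the time in explicitly keeps the sketch easy to test. A limiter like this slows bulk collection but does not by itself detect scraping bots or restrict exports, which the article lists as separate measures.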
